Telecommunications operator is integrating GPU cloud, AI-RAN and software for AI data centers to evolve into an AI infrastructure provider

BARCELONA, March 2, 2026 /PRNewswire/ — SoftBank Corp. (President & CEO: Junichi Miyakawa, “SoftBank”) announced a new vision, Telco AI Cloud, aimed at building next-generation social infrastructure for the AI era by leveraging its nationwide telecommunications foundation.

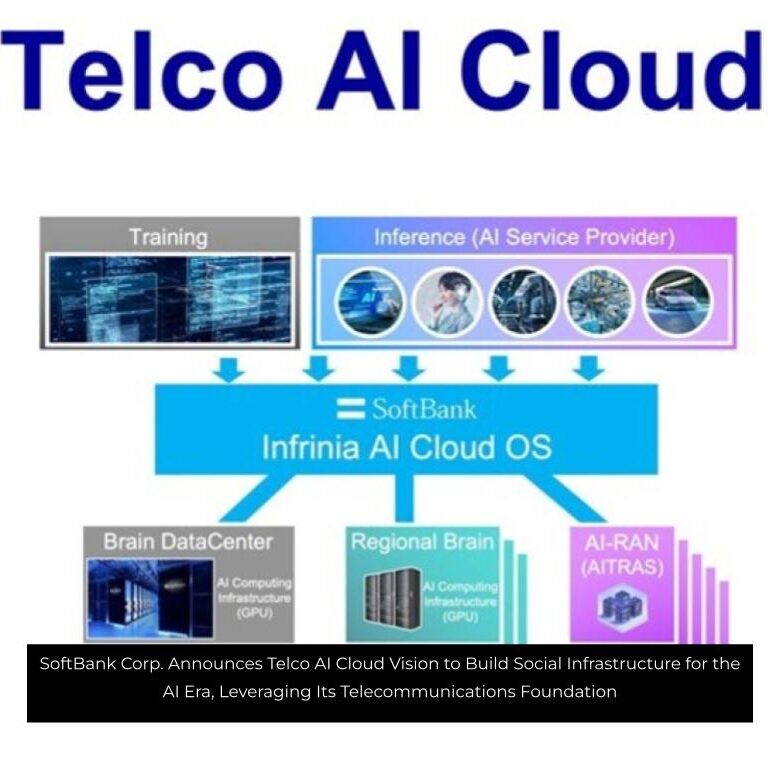

Telco AI Cloud is an AI infrastructure vision that integrates a large-scale AI data center platform powered by a GPU (Graphics Processing Unit) cloud, an AI-RAN-based MEC (Multi-access Edge Computing) platform, and a software stack for AI data centers*1 called “Infrinia AI Cloud OS.” By optimizing AI processing from training to inference and utilizing its nationwide telecommunications infrastructure, SoftBank will build a distributed AI infrastructure that delivers low latency, high reliability, and sovereign capability (data sovereignty). Through its Telco AI Cloud vision, SoftBank aims to evolve beyond the traditional role of a telecommunications operator and become an AI infrastructure provider.

Components of Telco AI Cloud

Telco AI Cloud is a vision uniquely enabled by SoftBank’s position as a telecommunications operator with a nationwide network infrastructure. Unlike hyperscaler-type centralized clouds, it enables the construction of a distributed AI infrastructure embedded within a telecommunications network.

Telco AI Cloud consists primarily of:

- Large-scale AI data centers (GPU cloud) responsible mainly for AI training,

- An AI-RAN-based MEC platform and orchestrator that perform low-latency inference processing, and

- The “Infrinia AI Cloud OS” software stack that centrally manages and integrates these components.

In its AI-RAN product “AITRAS,” SoftBank is developing an orchestrator (“AITRAS Orchestrator”) that monitors, in real time, the demand for computing resources used for both AI processing and RAN (Radio Access Network) control. Based on multiple indicators, such as resource availability, application requirements, and projected power consumption, the orchestrator dynamically allocates resources. Within Telco AI Cloud, RAN itself is managed as a unified AI application, enabling advanced cross-domain control of computing resources across both the telecommunications network and AI processing infrastructure.
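As a rough illustration of indicator-based allocation of this kind, the following Python sketch places workloads on sites using the three indicators named above. It is not SoftBank's implementation: the `Site` and `Workload` types, the `choose_site` and `allocate` functions, the scoring formula, and the `power_weight` parameter are all hypothetical, chosen only to show how resource availability, application requirements, and projected power consumption could be combined in one decision.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Site:
    """Hypothetical compute site (edge or data center)."""
    name: str
    free_gpus: int        # resource availability
    latency_ms: float     # latency from the site to end users
    watts_per_gpu: float  # used to project power consumption


@dataclass
class Workload:
    """Hypothetical workload; RAN control is modeled like any other one."""
    name: str
    gpus_needed: int
    max_latency_ms: float  # application requirement


def choose_site(workload: Workload, sites: List[Site],
                power_weight: float = 0.001) -> Optional[Site]:
    """Return the best feasible site for a workload, or None if none fits."""
    # Hard constraints: enough free GPUs and latency within the requirement.
    feasible = [
        s for s in sites
        if s.free_gpus >= workload.gpus_needed
        and s.latency_ms <= workload.max_latency_ms
    ]
    if not feasible:
        return None

    def score(site: Site) -> float:
        # Assumed scoring: prefer GPU headroom, penalize projected power draw.
        headroom = site.free_gpus - workload.gpus_needed
        projected_power = site.watts_per_gpu * workload.gpus_needed
        return headroom - power_weight * projected_power

    return max(feasible, key=score)


def allocate(workloads: List[Workload],
             sites: List[Site]) -> Dict[str, Optional[str]]:
    """Place workloads in order; note that this mutates Site.free_gpus."""
    placements: Dict[str, Optional[str]] = {}
    for w in workloads:
        site = choose_site(w, sites)
        if site is not None:
            site.free_gpus -= w.gpus_needed
        placements[w.name] = site.name if site is not None else None
    return placements


if __name__ == "__main__":
    sites = [Site("edge-a", 4, 5.0, 300.0), Site("core", 64, 40.0, 250.0)]
    workloads = [
        Workload("ran-control", 2, 10.0),
        Workload("inference", 2, 20.0),
        Workload("training", 32, 1000.0),
    ]
    print(allocate(workloads, sites))
```

In this sketch the latency-sensitive workloads land on the edge site and the training job on the larger site. A real orchestrator would re-evaluate placements continuously as demand changes rather than placing each workload once.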

In distributed AI infrastructure environments, variations in configurations across sites can create operational complexity. To address this challenge, SoftBank has developed “Infrinia AI Cloud OS,” a software stack that provides integrated management from GPUs and telecommunications networks to Kubernetes*2 and AI workloads. This enables optimized AI processing from training to inference and supports secure, multi-tenant GPU cloud operations.